I stumbled across this awesome article today: Revving Up Your Hibernate Engine.

Well worth the read if you are using, or are planning to use, Hibernate in the near future.

To quote the summary of the article:

"This article covers most of the tuning skills you’ll find helpful for your Hibernate application tuning. It allocates more time to tuning topics that are very efficient but poorly documented, such as inheritance mapping, second level cache and enhanced sequence identifier generators.

It also mentions some database insights which are essential for tuning Hibernate.

Some examples also contain practical solutions to problems you may encounter."

Friday, December 31, 2010

Friday, December 24, 2010

Find all the classes in the same package

It's been a slow month for blogging, due to the release of a new World of Warcraft expansion, crazy year-end software releases at work, and the general retardation of the human collective consciousness that happens this time of year.

With all of that, I did however have some fun writing the following bit of code. We have a "generic" getter and setter test utility used to gain a couple of extra percent of code coverage with minimal effort. That utility does, however, require that you call it for every class you want to test. Being lazy, I wanted to just give it one class from a specific package and have it check all the classes in that package.

I initially thought there would be a simple way to do it with the Java reflection API. Unfortunately I didn't find one; it seems a "Package" does not keep track of its classes. So after a bit of digging on the net and feverish typing (ignoring the actual project I am currently working on for a little while), here is what I came up with. The tip is really just a chain of methods starting from the java.lang.Class object: "getProtectionDomain().getCodeSource().getLocation().toURI()". That gives you the base location to work from, and I found it somewhere on Stack Overflow.

There are 2 public methods: findAllClassesInSamePackage and findAllInstantiableClassesInSamePackage. (For my purposes of code coverage I just needed the instantiable classes.)

Usage:

PackageClassInformationFinder:
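The following is only a minimal sketch of the idea, not the original listing. The class name PackageClassFinderSketch is made up, the two method names are borrowed from the description above, and "instantiable" is assumed to mean a concrete class with a non-private no-argument constructor. It also assumes the classes live in an exploded directory on the classpath; classes inside a jar are simply not found.

```java
import java.io.File;
import java.lang.reflect.Constructor;
import java.lang.reflect.Modifier;
import java.net.URI;
import java.net.URISyntaxException;
import java.security.CodeSource;
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch; not the original PackageClassInformationFinder. */
final class PackageClassFinderSketch {

    private PackageClassFinderSketch() {
    }

    /** Lists the classes whose .class files sit in the anchor's package directory. */
    static List<Class<?>> findAllClassesInSamePackage(Class<?> anchor) {
        List<Class<?>> classes = new ArrayList<>();
        // Bootstrap classes such as java.lang.String have no code source.
        CodeSource source = anchor.getProtectionDomain().getCodeSource();
        if (source == null || source.getLocation() == null) {
            return classes;
        }
        URI location;
        try {
            location = source.getLocation().toURI();
        } catch (URISyntaxException e) {
            throw new IllegalStateException("Unusable code source for " + anchor, e);
        }
        // Assumption: only locations on the local file system are scanned.
        if (!"file".equals(location.getScheme())) {
            return classes;
        }
        String anchorName = anchor.getName();
        int lastDot = anchorName.lastIndexOf('.');
        String packageName = lastDot < 0 ? "" : anchorName.substring(0, lastDot);
        File root = new File(location);
        File packageDir = packageName.isEmpty()
                ? root
                : new File(root, packageName.replace('.', File.separatorChar));
        File[] files = packageDir.listFiles();
        if (files == null) {
            return classes; // the location is a jar file, or the directory is missing
        }
        for (File file : files) {
            String fileName = file.getName();
            if (!file.isFile() || !fileName.endsWith(".class")) {
                continue;
            }
            String simpleName = fileName.substring(0, fileName.length() - ".class".length());
            String qualifiedName = packageName.isEmpty() ? simpleName : packageName + "." + simpleName;
            try {
                // Load without running static initializers.
                classes.add(Class.forName(qualifiedName, false, anchor.getClassLoader()));
            } catch (ClassNotFoundException | LinkageError e) {
                // Stale or unrelated class file: skip it rather than fail the scan.
            }
        }
        return classes;
    }

    /** Keeps only concrete classes that declare a non-private no-argument constructor. */
    static List<Class<?>> findAllInstantiableClassesInSamePackage(Class<?> anchor) {
        List<Class<?>> instantiable = new ArrayList<>();
        for (Class<?> candidate : findAllClassesInSamePackage(anchor)) {
            if (candidate.isInterface() || Modifier.isAbstract(candidate.getModifiers())) {
                continue;
            }
            try {
                Constructor<?> constructor = candidate.getDeclaredConstructor();
                if (!Modifier.isPrivate(constructor.getModifiers())) {
                    instantiable.add(candidate);
                }
            } catch (NoSuchMethodException | LinkageError e) {
                // No no-argument constructor (enums, inner classes, ...): not instantiable here.
            }
        }
        return instantiable;
    }
}
```

Calling PackageClassFinderSketch.findAllInstantiableClassesInSamePackage(SomeBean.class), where SomeBean stands for any class in the package you care about, would then give the list to feed to the getter and setter test utility.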

Sunday, December 5, 2010

Enabling enterprise log searching - Playing with Hadoop

Having a bunch of servers spewing out tons of logs is really a pain when trying to investigate an issue. A custom enterprise-wide search would be one of those awesome little things to have, and would literally save developers days of their lives, not to mention their sanity. The "corporate architecture and management gestapo" will obviously be hard to convince, but the chance to write and set up my own "mini Google wannabe" MapReduce indexing is just too tempting. So this will be a personal little project for the next while. The final goal will be distributed log search, using Hadoop and Apache Solr. My environment mostly consists of log files from:

WebLogic application server, legacy CORBA components, Apache, Tibco, and a mixture of JCAPS / OpenESB / GlassFish as well.

Setting Up Hadoop and Cygwin

The first thing to get up and running will be Hadoop. Our environment runs on Windows, and therein lies the first problem: to run Hadoop on Windows you are going to need Cygwin.

Download: Hadoop and Cygwin.

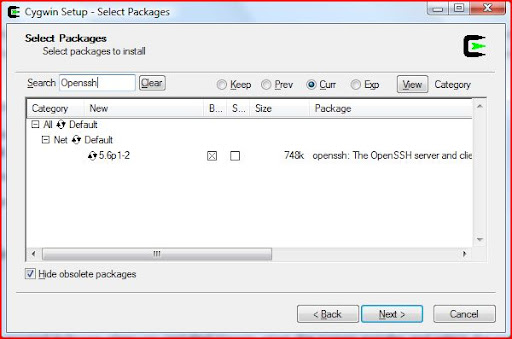

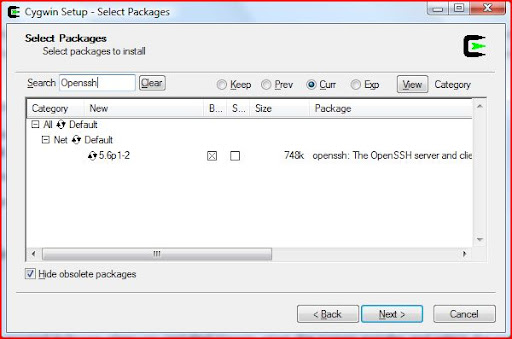

Install Cygwin, and make sure to include the OpenSSH package.

Once installed, using the Cygwin command prompt: ssh-host-config

This sets up the ssh configuration. Reply yes to everything, except when it asks:

"This script plans to use cyg_server, Do you want to use a different name?" Answer no to that one.

There seem to be a couple of issues with regard to permissions between Windows (Vista in my case), Cygwin and sshd.

Note: Be sure to add your Cygwin "\bin" folder to your Windows path (else it will come back and bite you when trying to run your first MapReduce job). And, typical of Windows, a reboot is required to get it all working.

So once that is done you should be able to start the ssh server: cygrunsrv -S sshd

Check that you can ssh to the localhost without a passphrase: ssh localhost

If that asks for a passphrase, run the following:

ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa

cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

Now back to the Hadoop configuration:

Assuming that Hadoop was downloaded and unzipped into a working folder, ensure that JAVA_HOME is set by editing [working folder]/conf/hadoop-env.sh.

Then go into [working folder]/conf, and add the following to core-site.xml:
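The fragment below is an assumption rather than my exact file: it is the usual single-node (pseudo-distributed) setting for Hadoop releases of this era, with fs.default.name pointing at a NameNode on localhost. The port 9000 is just the conventional choice.

```xml
<configuration>
  <!-- Assumed value: NameNode address for a single-node setup -->
  <property>
    <name>fs.default.name</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
```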

To mapred-site.xml add:
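Again an assumption rather than my exact file: the usual single-node setting, with mapred.job.tracker pointing at a JobTracker on localhost. The port 9001 is just the conventional choice.

```xml
<configuration>
  <!-- Assumed value: JobTracker address for a single-node setup -->
  <property>
    <name>mapred.job.tracker</name>
    <value>localhost:9001</value>
  </property>
</configuration>
```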

Go to the hadoop folder: cd /cygdrive/[drive]/[working folder]

Format the DFS: bin/hadoop namenode -format

Execute the following: bin/start-all.sh

You should then have the following URLs available:

http://localhost:50070/

http://localhost:50030/

A Hadoop application is made up of one or more jobs. A job consists of a configuration file and one or more Java classes; these interact with the data that exists on the Hadoop Distributed File System (HDFS).

Now to get those pesky log files into HDFS. I created a little HDFS wrapper class to allow me to interact with the file system. It defaults to my own values (the ones in core-site.xml).

HDFS Wrapper:

I also found that a quick way to start searching the uploaded log file is the Grep example included with Hadoop, so I included it in my HDFS test case below. Simple Wrapper Test:
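As a rough illustration of what the Grep example computes, here is a sketch in plain Java over an in-memory list. It is not the Hadoop API and not the wrapper test itself; the class name LogGrepSketch and the sample log lines are made up. As far as I understand it, the bundled example extracts every regex match from the input, sums the counts per matched string, and sorts by count, which is what this mimics.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical in-memory stand-in for a grep-style count; no Hadoop involved. */
final class LogGrepSketch {

    private LogGrepSketch() {
    }

    /** Counts every regex match across the lines, most frequent first. */
    static List<Map.Entry<String, Long>> grep(List<String> lines, String regex) {
        Pattern pattern = Pattern.compile(regex);
        Map<String, Long> counts = new TreeMap<>();
        for (String line : lines) {
            // "Map" step: emit each matched substring found in the line.
            Matcher matcher = pattern.matcher(line);
            while (matcher.find()) {
                // "Reduce" step: sum the occurrences per matched substring.
                counts.merge(matcher.group(), 1L, Long::sum);
            }
        }
        // Sort by count, descending; the sort is stable, so ties stay in key order.
        List<Map.Entry<String, Long>> sorted = new ArrayList<>(counts.entrySet());
        sorted.sort(Map.Entry.<String, Long>comparingByValue().reversed());
        return sorted;
    }
}
```

For example, calling LogGrepSketch.grep on a handful of log lines with the pattern "ERROR|WARN" returns one entry per distinct match with its count, the most frequent one first.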
